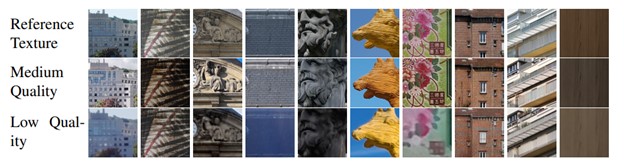

Image samples from the CARCO dataset

Motivation

We propose a data collection protocol tailored to the evaluation of learning-based camera quality assessment methods. We capture 10 different scenes, each photographed with many devices so that the same content is repeated, in different weather conditions. In every scene, a specific region of interest is selected. After a region alignment step, the images are shown to 22 annotators and quality scores are estimated using a pairwise comparison protocol. We also photograph every texture with a digital single-lens reflex (DSLR) camera, which provides a high-quality texture reference for each scene.

No existing dataset has both of the following characteristics: (1) the distortions are authentic and (2) the same content is repeated many times. This motivates the creation of a new dataset that has both, tailored to the needs of camera quality assessment.

Dataset’s organization

The data folder is organized as follows:

- Samples are grouped into one folder per scene; each folder contains the samples for that scene.

- The image samples are named numerically, except for the reference image, which is named “REF” followed by the scene identifier.

- Each scene folder contains the sample annotations, as well as the comparison matrix used to generate them.

- The annotations are mean-shifted: the mean of each scene's annotations is 0.
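The method used to turn the comparison matrix into scores is not specified here. As an illustration only, the sketch below fits a Bradley-Terry model, one common choice for pairwise comparison data, and then mean-shifts the result so that the scores of a scene average to 0. The function name `bradley_terry_scores` and the matrix layout (`wins[i][j]` counts how often sample i was preferred over sample j) are assumptions, not the dataset's documented format.

```python
import math


def bradley_terry_scores(wins, iterations=200):
    """Estimate mean-shifted log-strengths from a pairwise win-count matrix.

    Assumed layout: wins[i][j] is the number of times sample i was
    preferred over sample j. This is an illustrative sketch, not the
    procedure used to produce the CARCO annotations.
    """
    n = len(wins)
    strength = [1.0] * n
    for _ in range(iterations):
        updated = []
        for i in range(n):
            total_wins = sum(wins[i][j] for j in range(n) if j != i)
            denom = sum(
                (wins[i][j] + wins[j][i]) / (strength[i] + strength[j])
                for j in range(n)
                if j != i
            )
            # Keep strengths strictly positive so the logarithm is defined
            value = total_wins / denom if denom > 0 else strength[i]
            updated.append(max(value, 1e-12))
        # Rescale so the strengths stay in a stable numeric range
        norm = sum(updated)
        strength = [s * n / norm for s in updated]
    log_scores = [math.log(s) for s in strength]
    # Mean-shift: the scores of one scene sum to zero
    mean = sum(log_scores) / n
    return [s - mean for s in log_scores]


# Toy example with three samples (made-up counts)
example_wins = [
    [0, 3, 4],
    [1, 0, 3],
    [0, 1, 0],
]
example_scores = bradley_terry_scores(example_wins)
```

Because the scores are mean-shifted per scene, they express relative quality within a scene; comparing raw values across scenes may not be meaningful.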

Practical Usage

This section gives the steps needed to build a PyTorch dataset from the images.

The example below builds a single dataset that combines all scenes.

Assume the path to the dataset root folder is stored in src:

    import os

    src = os.path.join('your_path', 'CARCO')

First we need a random crop function that cuts the image down to the standard input size of a convolutional neural network.

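    from random import randrange

    def randomCrop(img, size=224):
        # img is an array of shape (height, width, channels)
        h, w, _ = img.shape
        # +1 so the last valid offset is reachable and an image of exactly `size` pixels works
        top = randrange(0, h - size + 1)
        left = randrange(0, w - size + 1)
        return img[top:top + size, left:left + size, :]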
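The crop function above draws offsets from the global random state and fails with an unhelpful error when an image is smaller than the crop size. A minimal sketch of a reproducible variant follows; it only computes the crop coordinates, so it works with any image representation. The name `crop_box` and its parameters are hypothetical and not part of the dataset's code.

```python
import random


def crop_box(height, width, size=224, rng=None):
    """Return (top, left, bottom, right) of a random size x size crop.

    `rng` is an optional random.Random instance; passing a seeded one
    makes the crop reproducible.
    """
    rng = rng if rng is not None else random
    if height < size or width < size:
        raise ValueError(
            f"image of {height}x{width} is smaller than the {size}x{size} crop"
        )
    # randint is inclusive on both ends, so every valid offset can be drawn
    top = rng.randint(0, height - size)
    left = rng.randint(0, width - size)
    return top, left, top + size, left + size


# Same seed, same crop
box_a = crop_box(224, 300, rng=random.Random(0))
box_b = crop_box(224, 300, rng=random.Random(0))
```

With an array `img`, the crop is then `img[top:bottom, left:right, :]`.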

Now the actual dataset class:

    import os
    import numpy as np
    import pandas as pd
    import torch
    import torchvision
    from PIL import Image

    class CARCOdataset(torch.utils.data.Dataset):
        def __init__(self, dataframes, names, shuffle=True):
            # Tag each row with the index of its scene so its folder can be recovered later
            for i in range(len(dataframes)):
                dataframes[i]["Scene"] = i
            self.dataframe = pd.concat(dataframes)
            if shuffle:
                self.dataframe = self.dataframe.sample(frac=1)
            self.dataframe = self.dataframe.reset_index(drop=True)
            self.names = names

        def __len__(self):
            return len(self.dataframe)

        def __getitem__(self, index):
            row = self.dataframe.iloc[index]
            # names[i] is the folder of scene i; "Name" holds the image file name
            path = os.path.join(src, self.names[int(row["Scene"])], row["Name"])
            # Convert to an RGB array so that randomCrop can slice it
            img = np.array(Image.open(path).convert("RGB"))
            img = randomCrop(img)
            return torchvision.transforms.functional.to_tensor(img), row["Score"]

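Here is how to initialize this dataset in your training file:

    import os
    import pandas as pd

    # Keep only the scene folders, in a stable order
    SCENES = sorted(d for d in os.listdir(src) if os.path.isdir(os.path.join(src, d)))
    listDatasets = [pd.read_csv(os.path.join(src, i, "eval.csv")) for i in SCENES]
    dataset = CARCOdataset(listDatasets, SCENES)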
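Since every scene repeats the same content across many devices, splitting images at random may place near-identical content in both the training and the test set. One possible way to avoid this, sketched below, is to split by scene. This is an assumption about how the data might be used, not an evaluation protocol prescribed by the dataset; the function name `split_scenes` and the scene names in the example are hypothetical.

```python
import random


def split_scenes(scenes, test_fraction=0.2, seed=0):
    """Split scene folder names into (train, test) lists with no overlap."""
    # Sort first so the result depends only on the seed, not on listing order
    scenes = sorted(scenes)
    random.Random(seed).shuffle(scenes)
    n_test = max(1, round(len(scenes) * test_fraction))
    return scenes[n_test:], scenes[:n_test]


# Hypothetical folder names standing in for the output of os.listdir(src)
example_scenes = [f"scene_{i}" for i in range(10)]
train_scenes, test_scenes = split_scenes(example_scenes)
```

Each list can then be used in place of `SCENES` to build one `CARCOdataset` per split.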

Copyright (c) 2022 DXOMARK Image Labs

All rights reserved.

Permission is hereby granted, without written agreement and without license or royalty fees, to use, copy, modify, and distribute this database (the images, the results and the source files) and its documentation for the purpose of non-commercial research in the domain of image processing and computer vision only, provided that the copyright notice in its entirety appear in all copies of this database, and the original source of this database, DXOMARK IMAGE LABS (CARCO, https://corp.dxomark.com/dxomark-academy/carco) is acknowledged in any publication that reports research using this database.

The following paper is to be cited in the bibliography whenever the database is used:

- Marcelin Tworski, Benoit Pochon, Stéphane Lathuilière, “Camera Quality Assessment in Real-World Conditions”